Cursor launched its new in-house model, Composer 2, today and is claiming frontier-level coding performance. On Cursor’s own charts, the model scores very well on both CursorBench and Terminal-Bench 2.0, landing ahead of Opus 4.6 (high) and just behind GPT-5.4 (high). Those are still Cursor-presented numbers, so I’d treat them as promising rather than settled.

All of that goodness comes at a fraction of what those frontier models cost. At just $0.50 per 1M input tokens and $2.50 per 1M output tokens, Composer 2 is priced like an open-weight model. That’s a great deal!

But something interesting happened within the first few hours of release. Some users noticed that, when prompted for its model ID, the LLM identified itself as a variation of Kimi K2.5. Yes, the open-weight model from Moonshot AI that came out a month ago. If you haven’t tried it, you should—I was (and still am) very impressed by it.

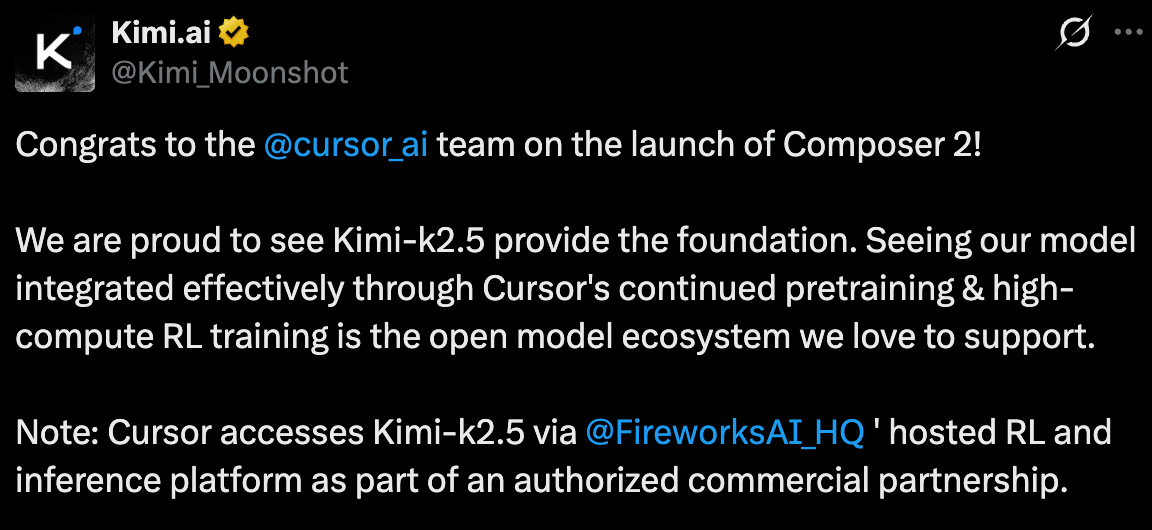

Anyway, hours later, Moonshot AI confirmed that Cursor’s use of the model was within the license terms. In other words: Composer 2 does appear to be built on Kimi K2.5, with Cursor adding enough post-training (via reinforcement learning) to turn it into a more specialized coding model.

I see a lot of comments on X and Hacker News downplaying the success of Cursor’s research team. That’s a mistake. This is an incredible achievement, and it’s reasonable to speculate that Cursor now has a repeatable playbook: take a strong open-weight model, then push it meaningfully further for coding through post-training. As I noted in my previous analysis of the AI bubble, there is a clear trend here: the big players are charging too much while the gap between proprietary and open models keeps shrinking. The cost of intelligence is coming down, and company valuations might follow.

Happy prompting!

—Filip